Piper 2, Arcade's new game generator

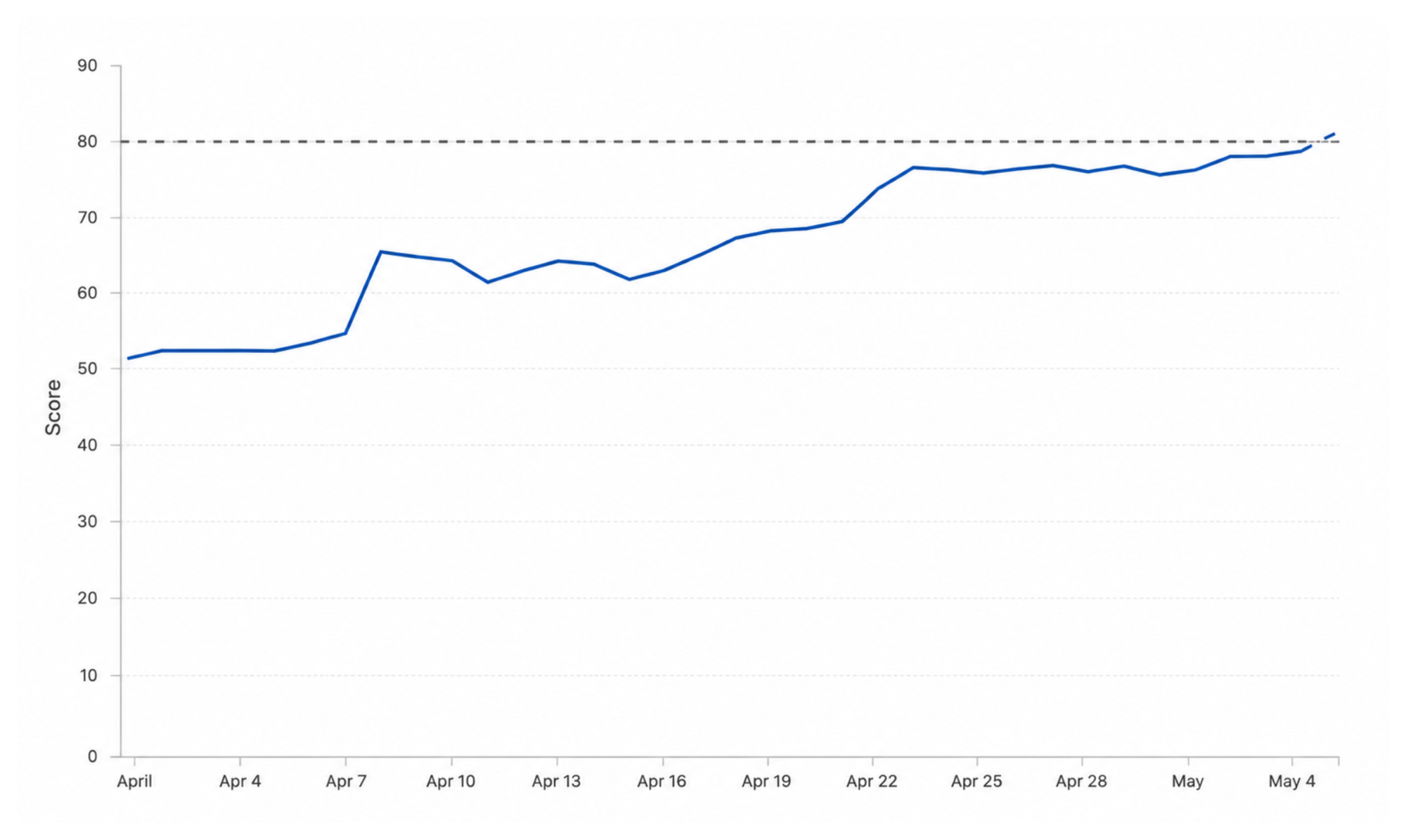

Arcade's new game generator raised its evaluation pass rate from 53% to 80% with better image generation and self-healing content.

When we released Arcade in February, it was based on a promise that teachers could create custom learning games in under a minute. We want to save teachers time and help them create differentiated material for their students.

Implicit in that promise was that the games would be good. Generating games quickly is the easy part; they also had to be high-quality enough that a teacher could use them without much manual editing. They had to support audio and images alongside text, too, because text alone cannot cover the breadth of subjects taught across grade levels.

Over the past month we have been hard at work improving Piper, our AI game generator. We're thrilled to introduce Piper 2, a substantial jump in quality from our first generator. Below we'll share our approach and what we improved.

Building the evaluator

How do you decide whether a game is "good"? First we had to define our criteria, then build an evaluation system to check games against those criteria.

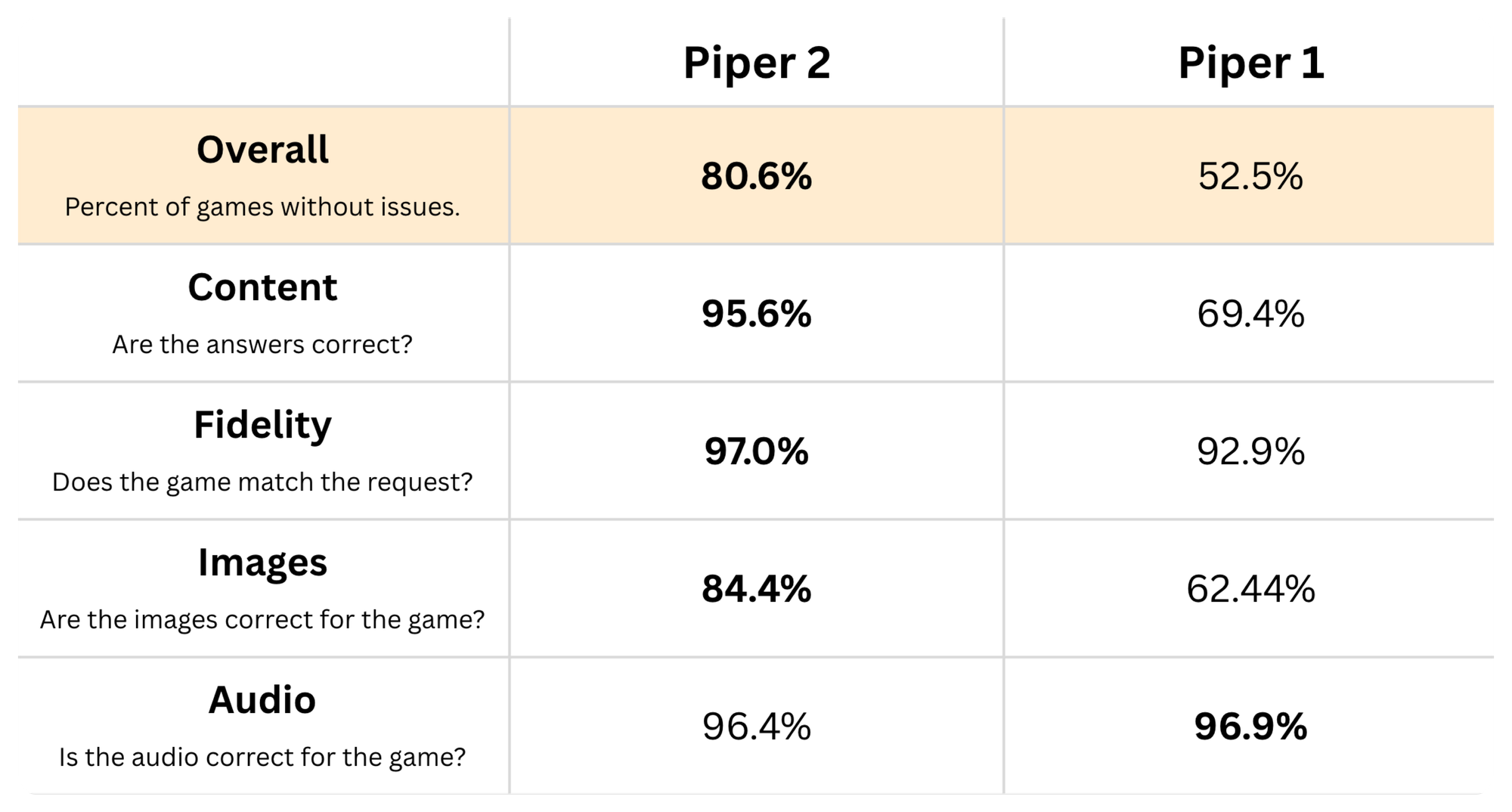

- Content: Are all of the answers completely and exclusively correct?

- Fidelity: Does the game match what the teacher requested?

- Images: Are the images accurate to the content of the game?

- Audio: Does the audio represent the sounds it's supposed to?

If a game failed any of these checks, it failed the entire evaluation.
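As a rough illustration of the all-or-nothing rule above, here is a minimal Python sketch. The `CheckResults` structure and the function names are our own assumptions for this example, not Arcade's actual evaluator; in practice each boolean would come from a separate check.

```python
from dataclasses import dataclass


@dataclass
class CheckResults:
    """Outcome of the four checks for one generated game (hypothetical structure)."""
    content: bool   # all answers completely and exclusively correct
    fidelity: bool  # game matches what the teacher requested
    images: bool    # images accurate to the content of the game
    audio: bool     # audio represents the sounds it's supposed to


def passes_evaluation(results: CheckResults) -> bool:
    # A single failed check fails the entire evaluation.
    return all((results.content, results.fidelity, results.images, results.audio))


def pass_rate(batch: list[CheckResults]) -> float:
    """Fraction of games in the batch that passed every check."""
    if not batch:
        raise ValueError("cannot compute a pass rate for an empty batch")
    return sum(passes_evaluation(r) for r in batch) / len(batch)
```

Because every check must pass, the overall pass rate can never be higher than the pass rate of the weakest individual check.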

After our first evaluation run, we found we had a pass rate of 52.5%. Almost half of the games we generated failed in some meaningful way for teachers.

Chasing perfection

Increasing this number became the team's top priority. If we weren't fulfilling that fundamental promise, none of our other efforts mattered. We set an initial goal of an 80% pass rate, with an ultimate, and likely unattainable, goal of 100%.

We ran more evaluations and analyzed the results. Three failures kept coming up:

- Low-quality or inaccurate images

- Incorrect or ambiguous answers

- Game content that did not match the request

These issues were a large enough portion of our failures that fixing them would get us to our 80% goal.
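The kind of analysis described above can be as simple as counting which checks failed across a batch of evaluated games. The sketch below assumes each game's result is recorded as a list of failed check names; that format, and the function name `tally_failures`, are hypothetical.

```python
from collections import Counter


def tally_failures(failed_checks_per_game: list[list[str]]) -> list[tuple[str, int]]:
    """Count how many games failed each check, most common first."""
    counts: Counter[str] = Counter()
    for failed in failed_checks_per_game:
        # Use a set so a check is counted at most once per game.
        counts.update(set(failed))
    return counts.most_common()


# Made-up example data, purely to show the shape of the input.
example = [["images"], ["images", "content"], [], ["fidelity", "images"], ["content"]]
print(tally_failures(example))
```

Sorting by frequency makes it easy to see which fixes would move the pass rate the most.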

Picasso: the image agent

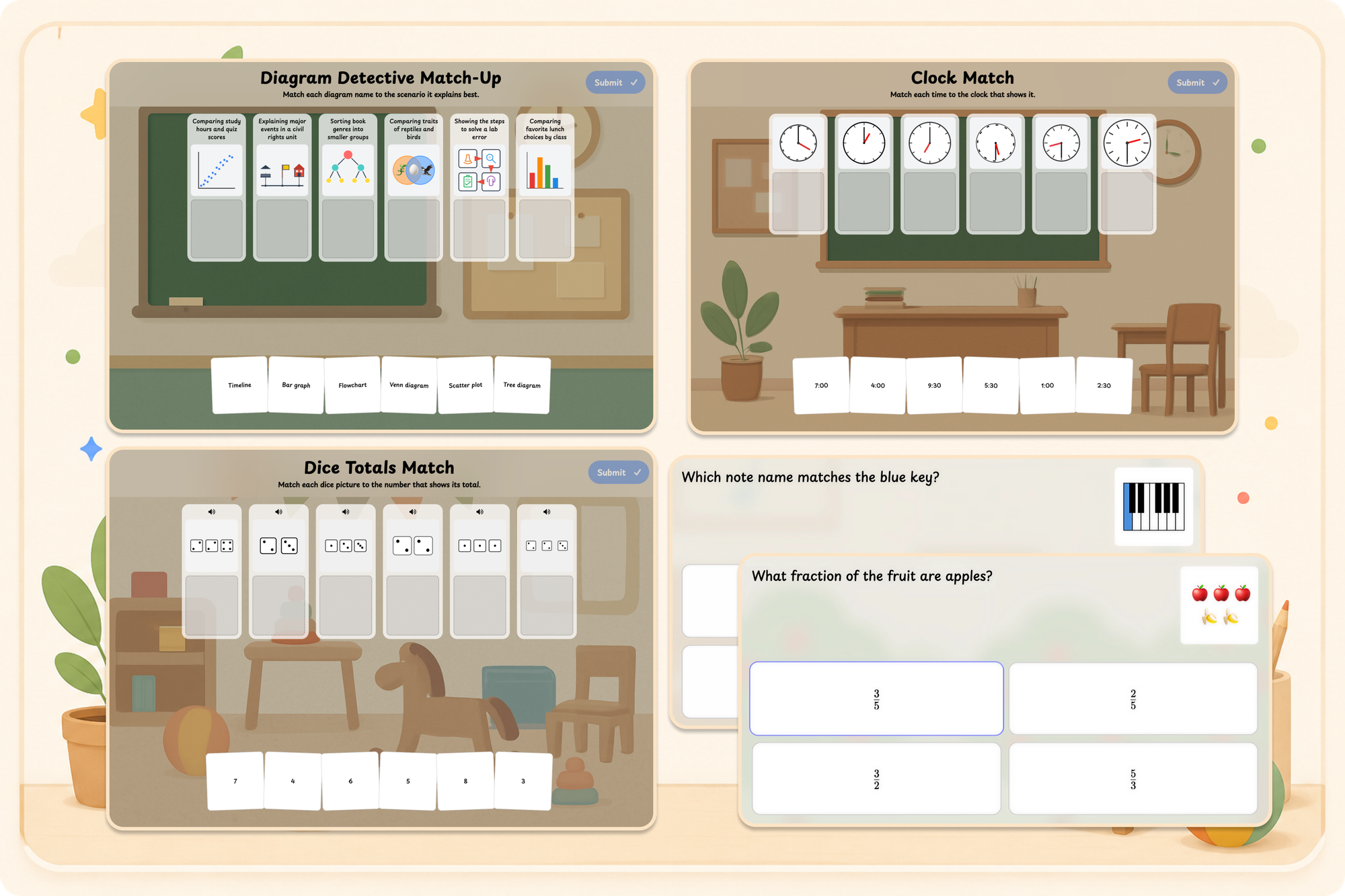

Giving AI the ability to include images alongside the text it generates is a surprisingly difficult problem. We built a dedicated agent, which we call Picasso, that selects from multiple image search and generation tools to find the best image for a given request.

Some image types proved harder than others. Picasso struggled most with diagrammatic images requiring spatial precision and symbolic accuracy: "an analog clock showing 8:27," "a simple electrical circuit with a switch and a light," "4 gold coins." So we gave Picasso new tools purpose-built for these structured visuals, then tuned our prompts so it could reliably choose the right tool for each request.
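To make the idea of tool selection concrete, here is a deliberately simplified sketch. Picasso chooses tools through prompting; this example swaps in a plain keyword match as a stand-in, and the tool names and hint list are invented for illustration.

```python
from typing import Callable


# Hypothetical tools; the actual tools available to Picasso are not listed here.
def render_structured(request: str) -> str:
    """Placeholder for a purpose-built tool for diagrams, clocks, counts, etc."""
    return f"structured:{request}"


def search_images(request: str) -> str:
    """Placeholder for a general image search or generation tool."""
    return f"search:{request}"


# Assumed hints that a request needs spatial or symbolic precision.
STRUCTURED_HINTS = ("clock", "circuit", "coins")


def choose_tool(request: str) -> Callable[[str], str]:
    # Stand-in for the agent's decision: keyword matching instead of a prompted model.
    text = request.lower()
    if any(hint in text for hint in STRUCTURED_HINTS):
        return render_structured
    return search_images


tool = choose_tool("an analog clock showing 8:27")
print(tool("an analog clock showing 8:27"))
```

A fixed keyword list would not generalize to real requests, which is one reason to let a prompted agent make the choice.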

Some more examples of games using images from our new tools:

Heal thyself

Our two biggest quality problems – incorrect answers and content that drifted from the original request – turned out to share a common fix. Before sending a generated game to the user, we run it through a dedicated checker that flags correctness and adherence issues, then gives Piper a chance to fix itself. We call this self-healing.

You may notice some games generate in about thirty seconds while others take over a minute. The longer ones likely had issues that needed fixing: with self-healing, a game essentially goes through the generation flow more than once.
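The self-healing flow can be pictured as a generate, check, fix loop. The sketch below is an assumption-laden outline, not Piper's implementation: `generate`, `check`, and `fix` are hypothetical callables that stand in for model calls, and the `max_rounds` cap is our own choice.

```python
from typing import Callable


def generate_with_healing(
    request: str,
    generate: Callable[[str], str],
    check: Callable[[str, str], list[str]],
    fix: Callable[[str, str, list[str]], str],
    max_rounds: int = 2,  # assumed cap so a stubborn game cannot loop forever
) -> tuple[str, int]:
    """Return the final game and the number of fix rounds that were needed."""
    game = generate(request)
    rounds = 0
    while rounds < max_rounds:
        # The checker flags correctness and adherence issues against the request.
        issues = check(request, game)
        if not issues:
            break
        # Give the generator a chance to repair the flagged issues.
        game = fix(request, game, issues)
        rounds += 1
    return game, rounds
```

A game that passes the first check returns with zero fix rounds, while a game with flagged issues pays for at least one extra pass, which matches the difference in generation times.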

Game types that used to fail frequently now pass at much higher rates:

- Complicated math and logic problems get their work double-checked and corrected.

- Fill-in-the-blank games are reviewed so words can't ambiguously fit multiple slots.

- Games converted from longer worksheets or lesson plans stay faithful to the source content.

Reaching our goal

These fixes, along with steady improvements to our models and prompts, got us to our 80% pass rate target. But we still have a long way to go. Text-to-speech can mispronounce words or pick the wrong language. Images are often still incorrect or low quality. Piper has no awareness of current events for timely content.

We also recognize that AI generation won't always nail exactly what a teacher has in mind. So alongside continued quality work, we're giving teachers direct editing tools to fix any imperfections themselves.

Arcade is still a very new product and we have a lot of improvements and exciting new features coming shortly. If you'd like to work on Arcade or similar projects in education, we're hiring across a number of roles in San Francisco and Singapore!